Where Is Standardisation Used in the Real World?

- Ashish Sharma

- Apr 24

As part of my role training and consulting companies on data skills, I often teach them to use machine learning models to predict outcomes.

Machine learning models are a subset of AI models. They take in large amounts of data, learn the patterns and behaviours within it, and use these to predict outcomes on new data. Think of the line of best fit from GCSE: in machine learning this is called linear regression, and it is used in many different applications.
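As a reminder of what a line of best fit actually computes, here is a minimal sketch of least-squares linear regression in plain Python. It uses the standard formulas gradient = S_xy / S_xx and intercept = ȳ − gradient·x̄. The data points are hypothetical and were chosen to lie exactly on y = 2x + 1.

```python
def line_of_best_fit(xs, ys):
    """Return (gradient, intercept) of the least-squares line y = m*x + c."""
    n = len(xs)
    x_mean = sum(xs) / n
    y_mean = sum(ys) / n
    # S_xy and S_xx: sums of products of deviations from the means
    s_xy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    s_xx = sum((x - x_mean) ** 2 for x in xs)
    gradient = s_xy / s_xx
    intercept = y_mean - gradient * x_mean
    return gradient, intercept

# Hypothetical data lying exactly on y = 2x + 1
xs = [1, 2, 3, 4, 5]
ys = [3, 5, 7, 9, 11]
m, c = line_of_best_fit(xs, ys)
print(f"y = {m:.2f}x + {c:.2f}")  # prints "y = 2.00x + 1.00"
```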

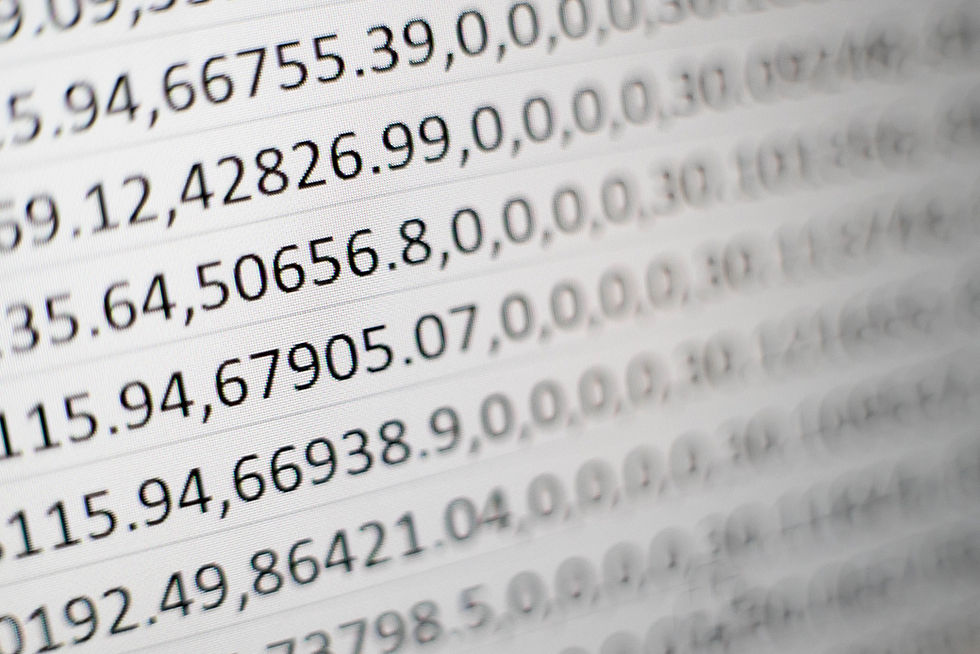

However, one recent session with a client focused on logistic regression, a related technique used to predict which of two categories something belongs to. We worked with a dataset that looked like this (extract of the top 5 rows shown):

The dataset has 30 columns of data from a study of 569 patients and X-ray images of a suspected tumour. The model being built would let us examine which factors contribute to an image being classified as malignant or benign.
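Logistic regression builds on the same idea as a line of best fit. It computes a linear score from the input columns and then passes that score through the logistic (sigmoid) function, so the output is a probability between 0 and 1. The sketch below shows only this prediction step in plain Python. The weights, bias and feature values are hypothetical and do not come from the client's model. Treating "malignant" as the class whose probability is output is also an assumption. In practice, a library would learn the weights from the data.

```python
import math

def sigmoid(z):
    """Logistic function: maps any real number into the interval (0, 1)."""
    # Two branches keep math.exp from overflowing for large |z|
    if z >= 0:
        return 1 / (1 + math.exp(-z))
    e = math.exp(z)
    return e / (1 + e)

def predict_probability(features, weights, bias):
    """Linear score (as in linear regression) passed through the sigmoid."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return sigmoid(z)

def classify(probability, threshold=0.5):
    # Hypothetical labelling: the probability is read as P(malignant)
    return "Malignant" if probability >= threshold else "Benign"

# Hypothetical weights, bias and (already standardised) feature values
weights = [1.5, -0.8, 2.0]
bias = -0.5
patient = [0.9, 0.2, 0.4]

p = predict_probability(patient, weights, bias)
print(round(p, 3), classify(p))
```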

Before feeding this data into the model, notice that the columns are on very different scales, ranging from small decimals up to four-digit numbers. This is not ideal for many machine learning models. Columns with larger values can dominate the fitting process, which biases the model towards them and distorts its output.
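The description above (569 patients, 30 numeric columns, malignant or benign) matches scikit-learn's bundled Breast Cancer Wisconsin (Diagnostic) dataset. This sketch uses that dataset as a stand-in to show the spread of scales, but it may not be the exact file used in the session. It assumes scikit-learn is installed.

```python
from sklearn.datasets import load_breast_cancer

# Assumption: scikit-learn's bundled breast cancer data stands in for the
# client's dataset (569 rows, 30 numeric columns)
data = load_breast_cancer()
X = data.data

# Print the smallest and largest value in each column to compare their scales
for name, col_min, col_max in zip(data.feature_names, X.min(axis=0), X.max(axis=0)):
    print(f"{name:25s} min={col_min:10.4f} max={col_max:10.4f}")
```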

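To prevent this, we use standardisation, in particular the coding formula you learnt for the Standard Normal Distribution. This formula converts each value $x$ in a column into a z-score using that column's mean $\mu$ and standard deviation $\sigma$:

$$z = \frac{x - \mu}{\sigma}$$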
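Applied to a single column, the coding formula can be sketched in plain Python. The column of values is hypothetical. The function divides by n, which gives the population standard deviation; this is also what scikit-learn's StandardScaler uses. A constant column has no spread, so this sketch maps it to zeros rather than dividing by zero.

```python
import math

def standardise(values):
    """Return the z-score z = (x - mean) / sd for every value in a list."""
    n = len(values)
    mean = sum(values) / n
    # Population standard deviation: divide by n, not n - 1
    sd = math.sqrt(sum((x - mean) ** 2 for x in values) / n)
    if sd == 0:
        # A constant column has no spread, so every value sits at the mean
        return [0.0] * n
    return [(x - mean) / sd for x in values]

# Hypothetical column of values on a four-digit scale
column = [500.0, 650.0, 800.0, 1200.0, 1850.0]
z_scores = standardise(column)
print([round(z, 3) for z in z_scores])

# Check: the standardised column has mean 0 and standard deviation 1
z_mean = sum(z_scores) / len(z_scores)
z_sd = math.sqrt(sum((z - z_mean) ** 2 for z in z_scores) / len(z_scores))
print(round(z_mean, 6), round(z_sd, 6))
```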

We then use StandardScaler() from the Python library scikit-learn. It applies this formula to every numeric column in the dataset, so that each column ends up with a mean of 0 and a standard deviation of 1. Evidence of this is below:
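Here is a minimal sketch of that step, assuming scikit-learn and NumPy are installed. Scikit-learn's bundled breast cancer dataset again stands in for the client's data. The sketch checks that every scaled column has a mean of about 0 and a standard deviation of about 1. It also confirms that the first column matches the coding formula applied by hand.

```python
import numpy as np
from sklearn.datasets import load_breast_cancer
from sklearn.preprocessing import StandardScaler

# Assumption: scikit-learn's bundled breast cancer data stands in for the
# client's dataset (569 rows, 30 numeric columns)
X, y = load_breast_cancer(return_X_y=True)

scaler = StandardScaler()
# fit learns each column's mean and sd; transform applies z = (x - mean) / sd
X_scaled = scaler.fit_transform(X)

# Every column should now have mean ~0 and standard deviation ~1
print("column means:", np.round(X_scaled.mean(axis=0), 6))
print("column sds:  ", np.round(X_scaled.std(axis=0), 6))

# The learned parameters: mean_ and scale_ hold each column's mean and sd
print("first column mean and sd:", scaler.mean_[0], scaler.scale_[0])

# Same result as applying the coding formula by hand to the first column
by_hand = (X[:, 0] - X[:, 0].mean()) / X[:, 0].std()
print("matches formula:", np.allclose(by_hand, X_scaled[:, 0]))
```

When the data is split into training and test sets, the usual practice is to call `fit` on the training set only. The fitted scaler's `transform` is then reused on the test set, so no information from the test set leaks into the model.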

This is a real-life application of standardisation in machine learning, built entirely on a formula you learn in the Year 13 Normal Distribution topic.

Without standardisation, a machine learning model might think one variable is “more important” simply because the numbers are larger, not because it actually has a stronger relationship with the outcome. This is very similar to why unstandardised exam scores can be misleading when comparing performance across subjects.
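The exam-score comparison can be made concrete with a short worked example. All marks, cohort means and standard deviations below are hypothetical. The higher raw mark (English) turns out to be the weaker result once each mark is measured against its own cohort.

```python
# Hypothetical exam results: raw mark plus each subject's cohort mean and sd
results = {
    "Maths":   {"mark": 70, "mean": 60, "sd": 5},
    "English": {"mark": 80, "mean": 75, "sd": 10},
}

def z_score(mark, mean, sd):
    """How many standard deviations a mark lies above the cohort mean."""
    return (mark - mean) / sd

z_scores = {s: z_score(r["mark"], r["mean"], r["sd"]) for s, r in results.items()}

for subject, z in z_scores.items():
    print(f"{subject}: raw mark {results[subject]['mark']}, z = {z:.1f}")
# prints "Maths: raw mark 70, z = 2.0"
# prints "English: raw mark 80, z = 0.5"
```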

Seeing standardisation used in machine learning helps show that A‑level statistics is not just about passing exams. The same ideas you learn when working with means, standard deviations and z‑scores are used in real systems that make predictions, spot patterns and support decision‑making in the modern world.

In short, standardisation is a great example of how A‑level maths underpins modern data science and artificial intelligence, turning familiar classroom techniques into powerful real‑world tools.
